I didn't expect many people to agree with me on the issue of web application development walking the plank.

Why?

For one, if I'm right, it's something no one wants to hear. Further, if what I said is correct and it's a novel concept, most people won't yet share that opinion, so backlash would follow naturally. On top of that, there's always the possibility that what I said is completely asinine.

Despite my expectation of imminent flaming, the people who responded raised some excellent points. I'd like to address them here and take the opportunity they presented to clarify my initial thoughts.

First, it would help to answer the question, "What do I mean when I say 'mostly fairly trivial'?"

Mostly: Most web applications.

Fairly: Each web application included in "mostly" is trivial to a reasonable degree.

Put together, I would say "most parts of most web applications are trivial."

I must have put too much emphasis on application generation, because that's where the biggest objections were, by far:

Barry Whitley noted,

> The be-all, end-all self-building framework/generator has been the holy grail of software development since its inception, and it isn't really much closer to achieving that now than it was 20 years ago.

Along those same lines, Mike Rankin brought up CASE tools:

> Anybody remember CASE (Computer Aided Software Engineering)? It was sold as the end of software development. To build an application, a layperson would just drag and drop computer generated components onto a "whiteboard" and connect them up by drawing lines. CASE was THE buzzword in the late eighties. 20 years later, it's nowhere to be found.
>
> Software development will become a complete commodity the moment business decides to stop using their systems as a way to gain a competitive advantage.

To be clear, although I believe some applications can be entirely generated, I don't pretend that anywhere near most of them can. However, I do think that most parts of most web applications fall into that category.

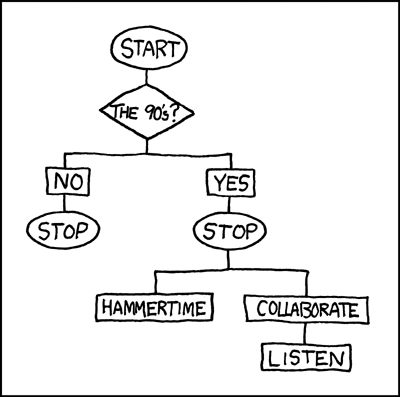

Getting back to programming-by-wizard: at one point, very early in what you might call my career, I programmed in G. This consisted mostly of connecting icons in what amounted to ridiculously complex flowcharts.

I think that's close to what many people envision as the "programmer killer" when they hear someone say there will be one. But having used that style for a couple of months, I can assure you that won't be it.

In fact, as Mike said, since competitive advantage dictates that businesses will keep innovating processes that need to be codified in software, there will always be software to write.

It's not a question of whether there will be software to write; it's a question of how much of it there is to go around for how many programmers, what skill level those programmers need to be at, and what those applications will look like and run on.

Whereas a decade ago a team of programmers might have built an application over several months, we're at a point now where a single programmer can build applications of similar scope in days to weeks. We've even got time to add all the bells and whistles nowadays.

Within an application, we need fewer programmers than we did in the past. To stay employed, you need to learn how to use the tools that abstract the accidental complexity away, in addition to learning new kinds of things to do.

Barry puts it well:

> As for the skills required, I'd actually argue that the workplace is demanding people with MORE skills than ever before. There is a lot of crap work for sure, and that market is dying out. For companies that want to be serious players, though, the demands are higher than they've ever been.

Indeed. That's what I'm talking about.

The repetitive, tedious stuff is going to be generated and outsourced. But there are shitloads of people still doing the tedious stuff, and there are shitloads of capable programmers who can glue the rest of it together.

We don't even need to get into the discussion of what will supplant the web and the number of jobs that will need to move around. The marketplace won't support us all at our high salaries. To be around in the future, you're going to need to cope with change better than the mainframe and green-screen programmers who can't find a job now. You're going to need to be capable of picking up new technologies, and knowing the principles behind them will help you do it. Knowing how to design and build complex systems to solve complex problems is where you'll need to be. This is in contrast to being handed specs and translating them into the newest fad language. That's what Professor Dewar was getting at, and that's what I'm getting at.

I don't expect most of the readers here will need to worry. Not necessarily because of anything I've done, but because most of you seem to embrace change.

I'm just sayin', that's all.

Any other thoughts? I'd love to hear them.
